Azure Databricks Artifacts Deployment

This article describes how to deploy JAR files, XML files, JSON files, wheel files, and global init scripts to a Databricks workspace.

Overview:

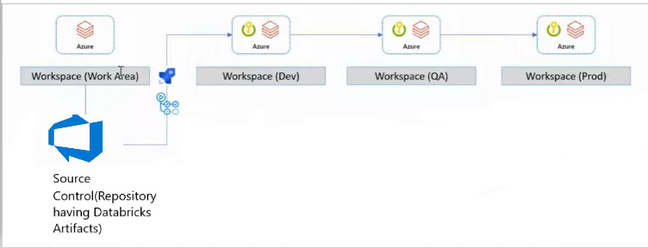

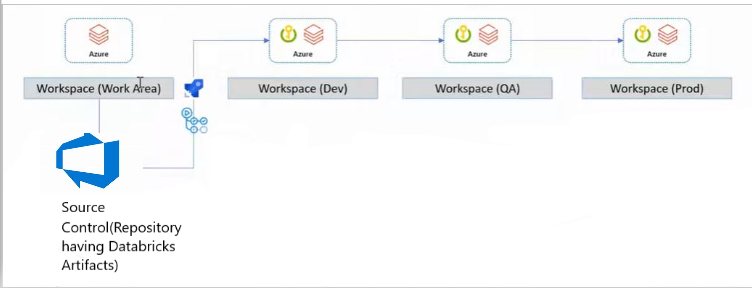

- A Databricks workspace contains notebooks, clusters, and data stores. The notebooks run on Databricks clusters and use the data stores when they need to refer to any custom configuration in the cluster.

- Developers need environment-specific configurations, mapping files, and custom functions (delivered as packages) to run the notebooks in the Databricks workspace.

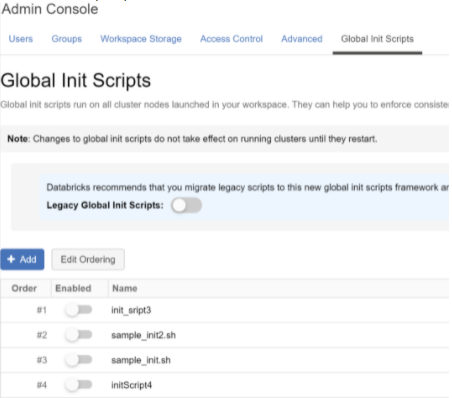

- Developers also need a global init script, which runs on every cluster created in the workspace. Global init scripts are useful when you want to enforce organization-wide library configurations or security screens.

- This pipeline automates the deployment of these artifacts to the Databricks workspace.

Purpose of this Pipeline:

- The purpose of this pipeline is to pick up the Databricks artifacts from the repository, upload them to a DBFS location in the Databricks workspace, and upload the global init scripts using the REST APIs.

- The CI pipeline builds the wheel (.whl) file using setup.py and publishes required files (whl file, Global Init scripts, jar files etc.) as a build artifact.

- The CD pipeline uploads all the artifacts to the DBFS location and also uploads the global init scripts using the REST APIs.

Pre-Requisites:

- All artifacts to be uploaded to the Databricks workspace must be present in the repository (main branch). Their location in the repository should be fixed (this article uses '/artifacts' as the location). The CI process creates the build artifact from this folder.

- The Databricks PAT token and the target Databricks workspace URL must be stored in the key vault.

Continuous Integration (CI) pipeline:

- The CI pipeline builds a wheel (.whl) file using a setup.py file and also creates a build artifact from all files in the artifacts/ folder, such as configuration files (.json), packages (.jar and .whl), and shell scripts (.sh).

- It has the following Tasks:

- Building the wheel file using the setup.py file, with these subtasks:

  - Using the latest Python version

  - Updating pip

  - Installing the wheel package

  - Building the wheel file with the command "python setup.py sdist bdist_wheel"

  - This setup.py file can be replaced with any Python file that is used to create .whl files

- Copying all the artifacts (JAR, JSON config, wheel file, shell script) to the artifact staging directory

- Publishing the artifacts from the staging directory

- The CD Pipeline will then be triggered after a successful run.

- The YAML code for this pipeline, with all the steps, is included on the next page.
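The copy-to-staging task listed above can be illustrated with a short Python sketch. This is not the pipeline's actual implementation (the article's pipeline is defined in YAML); the function name `stage_artifacts` and the set of file extensions are assumptions chosen to match the file types named in this article.

```python
import shutil
from pathlib import Path

# File types mentioned in this article; adjust to your repository (assumption).
ARTIFACT_SUFFIXES = {".json", ".xml", ".jar", ".whl", ".sh"}


def stage_artifacts(source_dir, staging_dir, suffixes=ARTIFACT_SUFFIXES):
    """Copy matching files from source_dir into staging_dir, keeping sub-folders.

    Hypothetical helper: it mirrors what a copy-files pipeline task does,
    so that only deployable artifacts end up in the published build artifact.
    """
    source = Path(source_dir)
    staging = Path(staging_dir)
    copied = []
    # sorted() materializes the listing before any file is copied.
    for path in sorted(source.rglob("*")):
        if path.is_file() and path.suffix.lower() in suffixes:
            target = staging / path.relative_to(source)
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(path, target)
            copied.append(target)
    return copied
```

Calling `stage_artifacts("artifacts", "staging")` would copy, for example, `artifacts/config/dev.json` to `staging/config/dev.json` and skip files with other extensions. Both folder names here are placeholders.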

CI Pipeline YAML Code:

Continuous Deployment (CD) pipeline:

The CD pipeline uploads all the artifacts (JAR, JSON config, wheel file) built by the CI pipeline into the Databricks File System (DBFS). The CD pipeline also uploads or updates any .sh files from the build artifact as global init scripts for the Databricks workspace.

It has the following Tasks:

- Key vault task to fetch the Databricks secrets (PAT token, URL)

- Upload Databricks Artifacts

- This will run a PowerShell script that uses the DBFS API from Databricks: https://docs.databricks.com/dev-tools/api/latest/dbfs.html#create

- Script Name: DBFSUpload.ps1

Arguments:

Databricks PAT Token to access Databricks Workspace

Databricks Workspace URL

Pipeline Working Directory URL where the files (JAR, JSON config, wheel file) are present
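The DBFS upload step can be sketched as follows. The article's own script is PowerShell (DBFSUpload.ps1) and is not reproduced here; this Python version is only an illustration of the create, add-block, close sequence of the DBFS API. The helper names `make_post` and `upload_to_dbfs`, the block size, and the choice to overwrite existing files are assumptions.

```python
import base64
import json
import urllib.request

# Raw bytes sent per add-block call. Chosen conservatively so the
# base64-encoded block stays below the API's 1 MB per-block limit.
BLOCK_SIZE = 512 * 1024


def make_post(workspace_url, token):
    """Return a function that POSTs JSON to the workspace REST API."""
    def post(endpoint, payload):
        request = urllib.request.Request(
            workspace_url.rstrip("/") + endpoint,
            data=json.dumps(payload).encode("utf-8"),
            headers={
                "Authorization": f"Bearer {token}",
                "Content-Type": "application/json",
            },
            method="POST",
        )
        with urllib.request.urlopen(request) as response:
            body = response.read()
        return json.loads(body) if body else {}
    return post


def upload_to_dbfs(post, content, dbfs_path, block_size=BLOCK_SIZE):
    """Stream bytes to DBFS: open a handle, append blocks, close the handle."""
    # Overwriting an existing file is an assumption made for this sketch.
    handle = post("/api/2.0/dbfs/create",
                  {"path": dbfs_path, "overwrite": True})["handle"]
    for start in range(0, len(content), block_size):
        block = content[start:start + block_size]
        post("/api/2.0/dbfs/add-block",
             {"handle": handle,
              "data": base64.b64encode(block).decode("ascii")})
    post("/api/2.0/dbfs/close", {"handle": handle})
```

A run would look like `upload_to_dbfs(make_post(url, token), Path(local_file).read_bytes(), target_path)`, with `url` and `token` taken from the key vault task and `target_path` being whatever DBFS location your team has agreed on. Passing `post` in as a parameter keeps the upload logic testable without a live workspace.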

- Upload Global Init Scripts

- This will run a PowerShell script that uses the Global Init Scripts API from Databricks: https://docs.databricks.com/dev-tools/api/latest/global-init-scripts.html#operation/create-script

- Script Name: DatabricksGlobalInitScriptUpload.ps1

Arguments:

Databricks PAT Token to access Databricks Workspace

Databricks Workspace URL

Pipeline Working Directory URL where the global init scripts are present

- The YAML code for this CD pipeline, with all the steps, and the scripts for uploading artifacts are included on the next page.
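The global init script upload step can be sketched in a similar way. The article's own script is PowerShell (DatabricksGlobalInitScriptUpload.ps1) and is not shown here; the Python below only illustrates how a request to the Global Init Scripts API might be prepared. Matching existing scripts by name to decide between create and update is an assumption, as are the function names and the choice to enable the script.

```python
import base64


def build_init_script_payload(name, script_text, position=None, enabled=True):
    """Build the JSON body for the Global Init Scripts API.

    The API expects the script content base64-encoded. 'position' is only
    sent when given, so an update does not reorder existing scripts.
    """
    payload = {
        "name": name,
        "script": base64.b64encode(script_text.encode("utf-8")).decode("ascii"),
        "enabled": enabled,  # enabling by default is an assumption of this sketch
    }
    if position is not None:
        payload["position"] = position
    return payload


def plan_init_script_request(existing_scripts, name, script_text):
    """Return (method, endpoint, payload) for uploading one script.

    existing_scripts is the 'scripts' list returned by
    GET /api/2.0/global-init-scripts. If a script with the same name
    already exists it is updated (PATCH), otherwise it is created (POST).
    """
    payload = build_init_script_payload(name, script_text)
    for script in existing_scripts:
        if script.get("name") == name:
            endpoint = f"/api/2.0/global-init-scripts/{script['script_id']}"
            return "PATCH", endpoint, payload
    return "POST", "/api/2.0/global-init-scripts", payload
```

Separating the planning from the HTTP call makes it easy to log or review what the pipeline is about to change before any request is sent to the workspace.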

CD-YAML code:

DBFSUpload.ps1

DatabricksGlobalInitScriptUpload.ps1

End Result of Successful Pipeline Runs:

Global Init Script Upload:

Conclusion:

Using this CI/CD approach, we were able to upload the artifacts to the Databricks File System successfully.

References:

- https://docs.databricks.com/dev-tools/api/latest/dbfs.html#create

- https://docs.databricks.com/dev-tools/api/latest/global-init-scripts.html#operation/create-script
