Bring your own storage to Azure Maps

When you work with geospatial data in Azure Maps, for geofencing or indoor maps, you have until now needed to upload your data directly to our backend services, where Azure Maps stores, processes, and serves it. However, if you already use Azure and keep that data in an existing storage account, you no longer need to upload it again and create a duplicate in Azure Maps. With the new Azure Maps bring-your-own-storage capability, you can securely register your existing data and grant Azure Maps access to it. You keep full control over your data: where it is stored and how it is encrypted. This matters especially when you have requirements that your data stay in a specific geographic location, such as Europe.

Give Azure Maps permission to access your data

The Azure Maps Data Registration service was created to give you more control over your data. Currently, the Azure Maps Data Registry connects only to data stored in Azure Blob Storage, but we will expand this capability to other data sources in the future. There are three basic steps in the Azure Maps Data Registration process:

- Create or identify the Azure Storage account that will hold the data.

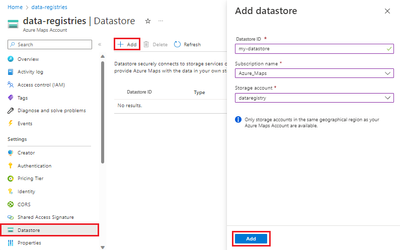

- Create an Azure Maps datastore and add a link to your Azure Storage account.

- Assign the required roles and permissions to a managed identity of your Azure Maps account so that Azure Maps can access the data behind the datastore.
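The three steps can be sketched as plain data. This is a minimal Python sketch that makes no Azure calls. The storage account ID follows the standard Azure Resource Manager format, and the two roles are the ones this post names for the managed identity. The `uniqueName`/`id` shape of the datastore entry, the simplified role-assignment records (`principalId`, `role`, `scope`), and scoping the roles to the storage account are assumptions for illustration; real role assignments reference role definition IDs.

```python
"""Sketch of the three data-registration setup steps as plain data (no Azure calls)."""


def storage_account_id(subscription_id: str, resource_group: str, account_name: str) -> str:
    """Step 1: the Azure Resource Manager ID of the storage account that holds the data."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Storage/storageAccounts/{account_name}"
    )


def datastore_entry(datastore_name: str, storage_id: str) -> dict:
    """Step 2: a datastore linking the Azure Maps account to the storage account.

    The {"uniqueName", "id"} shape is an assumption made for illustration.
    """
    return {"uniqueName": datastore_name, "id": storage_id}


# Roles named in this post for the managed identity.
REQUIRED_ROLES = ("Contributor", "Storage Blob Data Reader")


def role_assignments(principal_id: str, storage_id: str) -> list:
    """Step 3: simplified, hypothetical role-assignment records scoped to the storage account."""
    return [
        {"principalId": principal_id, "role": role, "scope": storage_id}
        for role in REQUIRED_ROLES
    ]


if __name__ == "__main__":
    sid = storage_account_id("<subscription-id>", "app-and-data-rg", "mydatastorage")
    print(datastore_entry("my-datastore", sid))
    for assignment in role_assignments("<managed-identity-principal-id>", sid):
        print(assignment["role"], "->", assignment["scope"])
```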

Azure Maps can access only the data you have registered through the Azure Maps Data Registry APIs. Access is controlled by Azure role-based access control (Azure RBAC) through a managed identity, either system-assigned or user-assigned. When you register your data, Azure Maps runs some validation steps and computes a hash of the data so it can detect later modifications. Whenever you update your data, call the same API again to re-register it and generate a new matching hash.
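To make the re-registration rule concrete, here is a minimal local simulation in Python. It is not the Azure Maps implementation: the post does not say which hash algorithm is used, so SHA-256 from the standard library is an illustrative choice, and `RegisteredBlob` is a hypothetical class standing in for a registered blob.

```python
import hashlib


def content_hash(data: bytes) -> str:
    """Fingerprint blob contents. SHA-256 is an illustrative choice, not a documented one."""
    return hashlib.sha256(data).hexdigest()


class RegisteredBlob:
    """Hypothetical local model of a registered blob and the hash recorded at registration."""

    def __init__(self, blob_url: str, data: bytes) -> None:
        self.blob_url = blob_url
        self.registered_hash = content_hash(data)

    def is_unchanged(self, current_data: bytes) -> bool:
        """True if the blob still matches the hash recorded when it was registered."""
        return content_hash(current_data) == self.registered_hash

    def reregister(self, current_data: bytes) -> None:
        """Mimic calling the registration API again: record a new matching hash."""
        self.registered_hash = content_hash(current_data)


if __name__ == "__main__":
    original = b'{"type": "FeatureCollection", "features": []}'
    blob = RegisteredBlob("https://<account>.blob.core.windows.net/<container>/fence.geojson", original)
    print(blob.is_unchanged(original))   # True
    updated = original.replace(b"[]", b"[null]")
    print(blob.is_unchanged(updated))    # False: the data changed after registration
    blob.reregister(updated)
    print(blob.is_unchanged(updated))    # True: the new hash matches again
```

The point of the sketch is the ordering: any edit to the blob breaks the match until the data is registered again.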

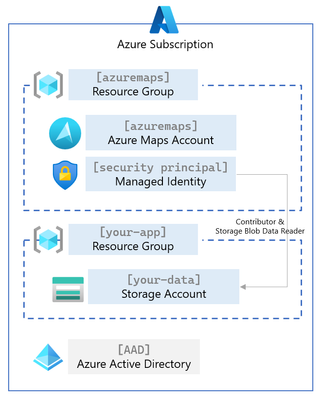

Azure Maps Registration Service Architecture

In the architecture diagram above, you see two resource groups: one for your Azure Maps account and one for your application and data. Before Azure Maps can access your storage account, you need to add a datastore to your Azure Maps account that references that storage account. You must also grant a security principal (a managed identity) the Contributor and Storage Blob Data Reader roles. The last step is to register the data in the storage account with Azure Maps. The Azure Maps Data Registry APIs are documented in the Azure Maps REST API reference.
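The final registration step is a REST call. The sketch below only builds the request and sends nothing. The `dataRegistries/{udid}` path, the `kind`/`azureBlob` body fields, and the `2023-06-01` API version are assumptions, not details stated in this post, so check them against the Data Registry API reference before use. Authentication (for example, a Microsoft Entra ID token) is omitted.

```python
import json
import uuid
from urllib.parse import quote

# Assumption: verify the current api-version in the Data Registry REST API reference.
API_VERSION = "2023-06-01"


def build_register_request(
    geography: str,
    udid: str,
    datastore_id: str,
    blob_url: str,
    data_format: str = "geojson",
) -> tuple:
    """Return (method, url, json_body) for registering one blob; nothing is sent.

    geography: Azure Maps geography endpoint prefix, e.g. "us" or "eu".
    udid: caller-chosen user data ID for the registration (assumed to be a GUID).
    datastore_id: the datastore that links Azure Maps to the storage account.
    """
    url = (
        f"https://{geography}.atlas.microsoft.com/dataRegistries/{quote(udid)}"
        f"?api-version={API_VERSION}"
    )
    body = {
        "kind": "AzureBlob",
        "azureBlob": {
            "dataFormat": data_format,
            "linkedResource": datastore_id,
            "blobUrl": blob_url,
        },
    }
    return "PUT", url, json.dumps(body)


if __name__ == "__main__":
    method, url, body = build_register_request(
        "eu",
        str(uuid.uuid4()),
        "my-datastore",
        "https://mydatastorage.blob.core.windows.net/maps/floorplan.geojson",
    )
    print(method, url)
    print(body)
```

Keeping request construction separate from sending makes it easy to inspect or log exactly what would be registered before any data leaves your control.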

When Azure Maps processes your data, for example to build an indoor map with Azure Maps Creator, the output (a tileset) is stored in the Azure Maps account and is not written back to your storage account. An Azure Storage account and an Azure Maps account can be linked only when they are in the same geography, which guarantees that your data does not leave the geographic boundary you selected.
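The same-geography rule can be expressed as a simple check. This is a minimal sketch: `REGION_TO_GEOGRAPHY` is a deliberately incomplete, illustrative mapping of a few Azure regions to the `us` and `eu` Azure Maps geographies, and `can_link` is a hypothetical helper, not part of any Azure API. Azure enforces the rule itself when you link the accounts.

```python
# Illustrative, intentionally incomplete mapping of Azure regions to Azure Maps geographies.
REGION_TO_GEOGRAPHY = {
    "eastus": "us",
    "westus2": "us",
    "northeurope": "eu",
    "westeurope": "eu",
}


def can_link(storage_region: str, maps_geography: str) -> bool:
    """True if a storage account in storage_region shares the Azure Maps account's geography."""
    geography = REGION_TO_GEOGRAPHY.get(storage_region.lower())
    if geography is None:
        raise ValueError(f"region not in this sketch's mapping: {storage_region}")
    return geography == maps_geography.lower()


if __name__ == "__main__":
    print(can_link("westeurope", "eu"))  # True: both in Europe
    print(can_link("eastus", "eu"))      # False: data would cross geographies
```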

So why use the Azure Maps Data Registration service?

The key reasons are to own your data, decide where it is stored, and control who can access it, for the data that matters to you, your product, and your customers. We built this capability in response to customer requests to bring their own data rather than duplicate it in Azure Maps. Now you can register your data directly with Azure Maps.

Did you know you can use Azure Storage Explorer to transfer data to and from a storage account?
