Connecting Azure to Mainframes with Low Latency

Many organizations run their mission-critical workloads on the mainframe and would benefit greatly from incorporating the mainframe into their strategic cloud initiatives. With Ensono’s Cloud-Connected Mainframe for Microsoft Azure solution, clients can use cloud-native services from Microsoft Azure for their mission-critical mainframe workloads and enable a large-scale migration to the cloud for surrounding servers that are sensitive to latency to the mainframe.

Solution Overview:

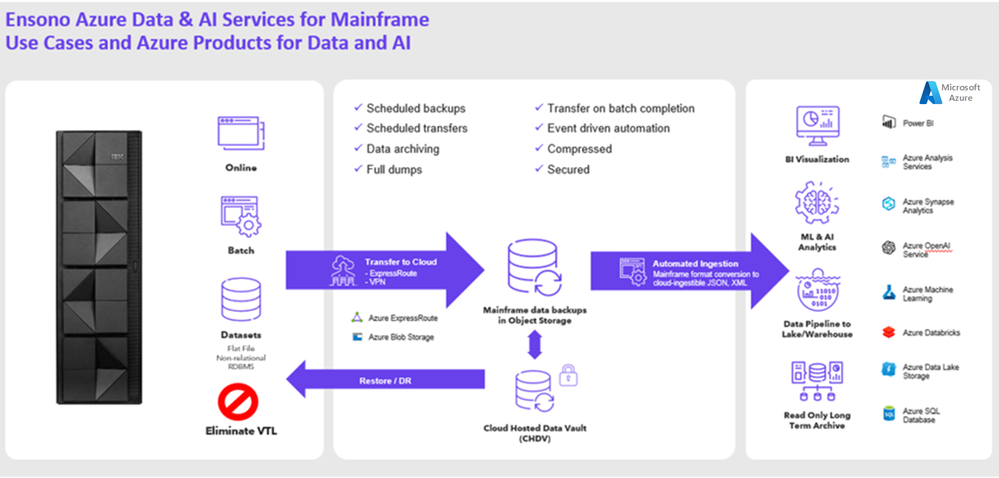

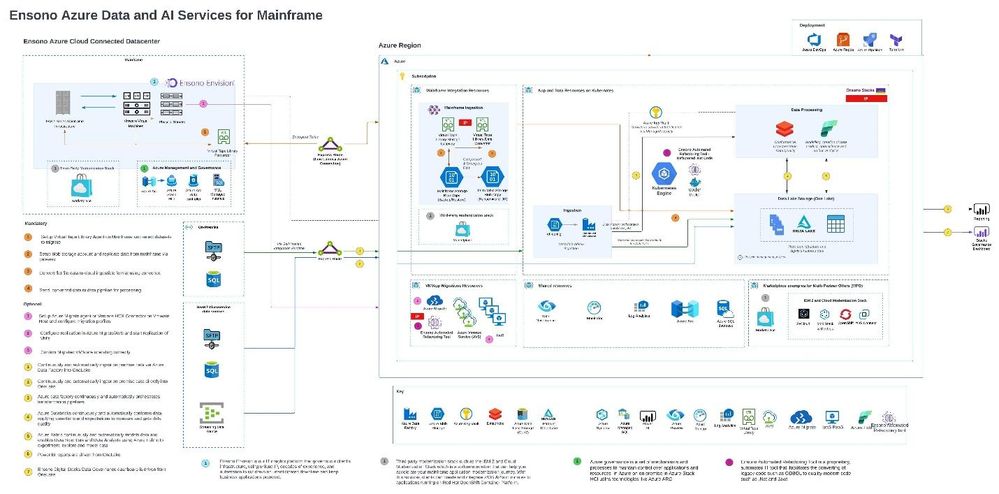

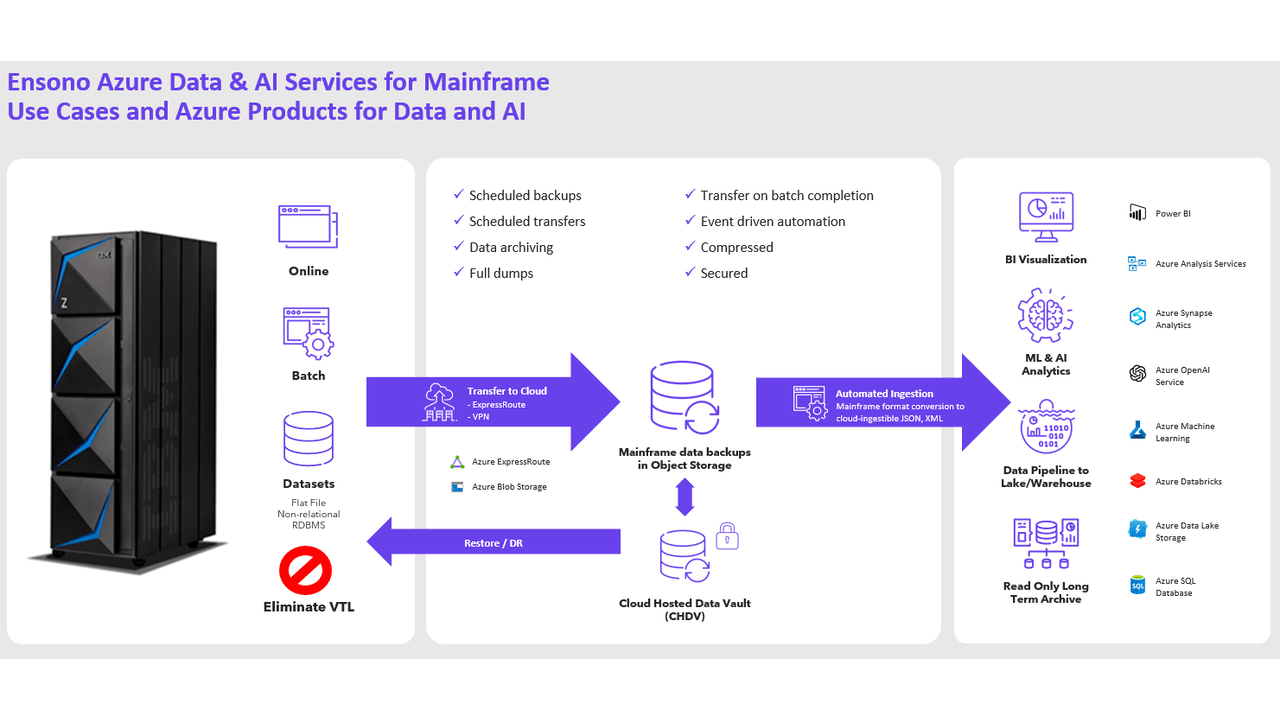

Figure 1 Ensono Azure Cloud Connected Mainframe

Ensono’s Cloud-Connected Mainframe for Microsoft Azure is a solution that bridges the gap between legacy and cloud applications by bringing the mainframe closer to Azure. The solution combines a low-latency connection to Azure (inclusive of ingress and egress) with a well-architected Azure reference architecture that enables various cloud-connected scenarios, such as data migration, application modernization, and AI analytics. The offering is available through the Azure Marketplace and is Azure Benefit Eligible, enabling customers to incorporate mainframe components into their Azure roadmap.

Advantages:

- Ability to write and monitor data to multiple sites and copies

- Optimization of physical storage devices for mainframe

- Access to historical, mission-critical data for analytics

- Removal of latency obstacles for cloud migration

- Access to additional Cloud services for the mainframe

- Improved near-real-time access to mainframe data

- Reduction of IT silos – DevOps for Mainframe, APIs in Azure for Mainframe, etc.

Solving Latency and Egress Concerns for the Surrounding Ecosystem

By using an ExpressRoute Local connection and Ensono’s Remote Hosted Mainframe solution, we can reduce latency between the Azure environment and the mainframe to 2.5 ms or less, depending on the solution configuration. Network connectivity includes ingress and egress charges, which creates a predictable cost model for scenarios where a client needs to transfer large amounts of data between the mainframe and Azure. Freeing surrounding workloads from their latency constraints allows clients to migrate more workloads to the cloud and to reimagine the configuration of the mainframe environment.

For example, more tape data can be stored in Azure, and the entire virtual tape storage (VTS) could possibly be replaced, because of this proximity. Later in this post, we expand on this scenario in greater detail.
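To see why the latency figure matters for surrounding servers, consider a rough piece of arithmetic. The sketch below assumes a workload that makes its calls to the mainframe one after another, and it treats the 2.5 ms figure as the delay per call. The call count and the 30 ms comparison value are hypothetical numbers chosen for illustration, not measurements.

```python
def total_network_wait_ms(round_trips: int, latency_ms: float) -> float:
    """Time spent waiting on the network when calls are made sequentially."""
    return round_trips * latency_ms


# Hypothetical workload: 10,000 sequential calls to the mainframe.
calls = 10_000
near = total_network_wait_ms(calls, 2.5)   # latency figure quoted in this post
far = total_network_wait_ms(calls, 30.0)   # assumed comparison value
print(f"{near / 1000:.1f} s vs {far / 1000:.1f} s")  # 25.0 s vs 300.0 s
```

Under these assumptions the same job spends 25 seconds rather than 5 minutes waiting on the network, which is the kind of difference that decides whether a chatty application can move to the cloud.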

Replacing or Optimizing the Mainframe Virtual Tape Storage (VTS) in Azure

Below is an overview of the steps involved in directing mainframe VTS to Azure, along with the resulting Mainframe Tape to Azure configuration. The solution for each client may vary slightly based on the use case and products selected.

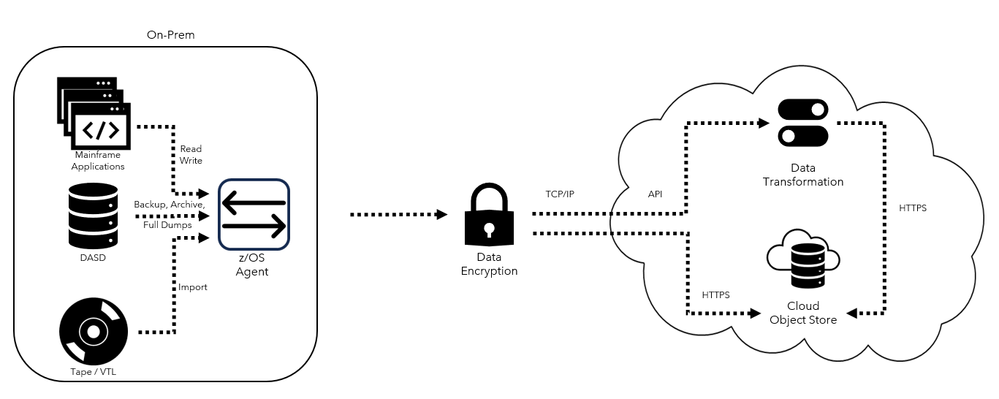

Figure 2 Overview of Tape to Cloud Solution

Install Software Driven Virtual Tape Solution

The cloud VTS software is a z/OS started task that sends mainframe data directly to Azure Blob Storage. The data is sent in encrypted form.

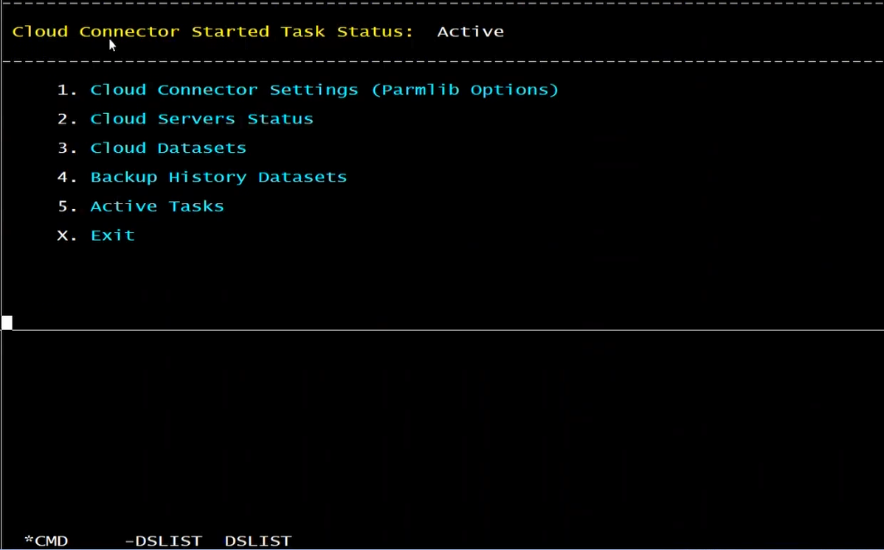

Figure 3 Software Driven Tape Solution is Installed

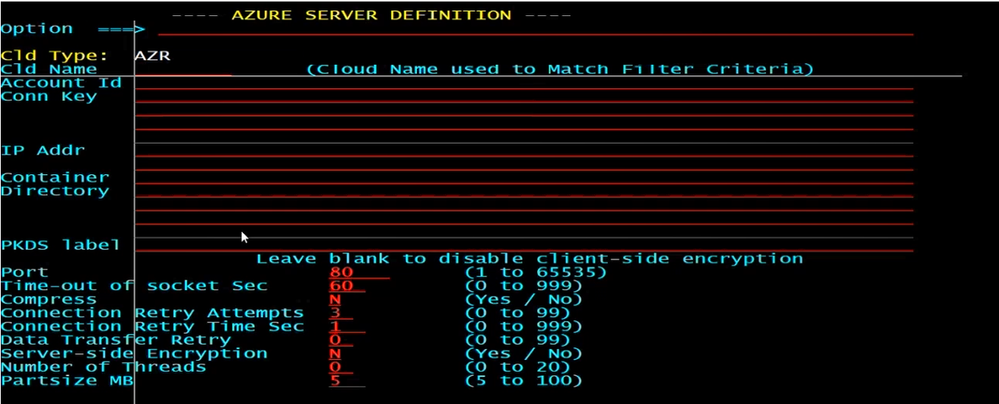

Create Azure Blob Storage Target

An Azure Blob Storage instance is created and defined within the tape solution software as the target for the selected tape volumes’ storage.

Figure 4 Azure Storage Instance Identified

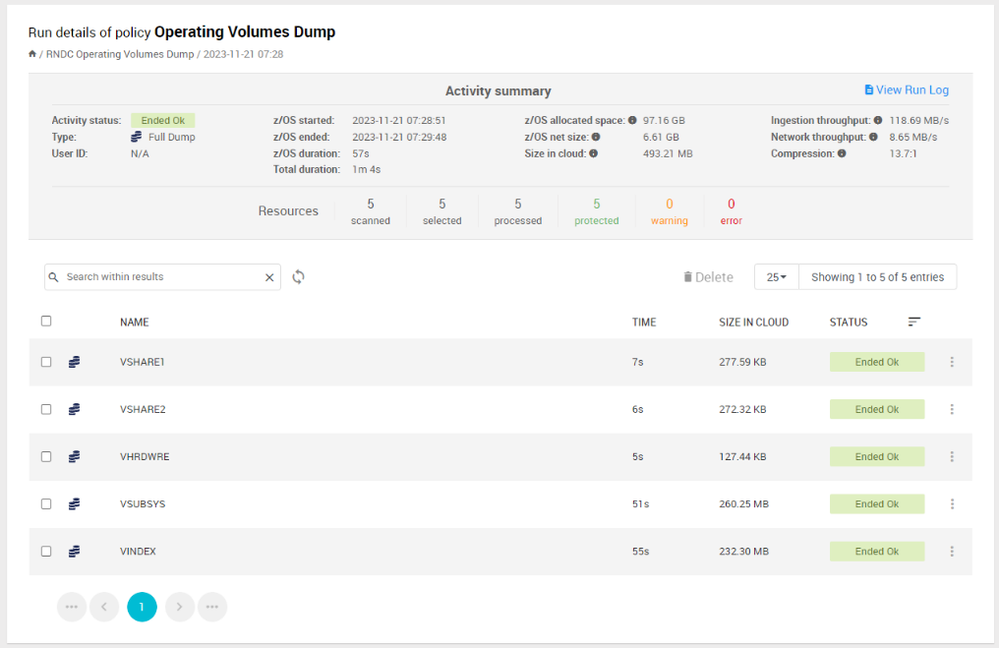

Figure 5 Mainframe Storage Volumes Landing in Azure Blob Storage

Backing Up Data to Azure and Recalling It to the Mainframe

Mainframe data is backed up to Azure by a batch job that uses the corresponding backup utility. When the batch job completes, the backup of the specified files is in Azure Blob Storage at the defined location.

The process for recalling files from Azure to the mainframe is similar to backing up data to Azure. When a job requests a tape that is stored in Azure, the backup utility automatically restores it from Azure.

Utilizing Tape Data Stored in Azure Blob Storage

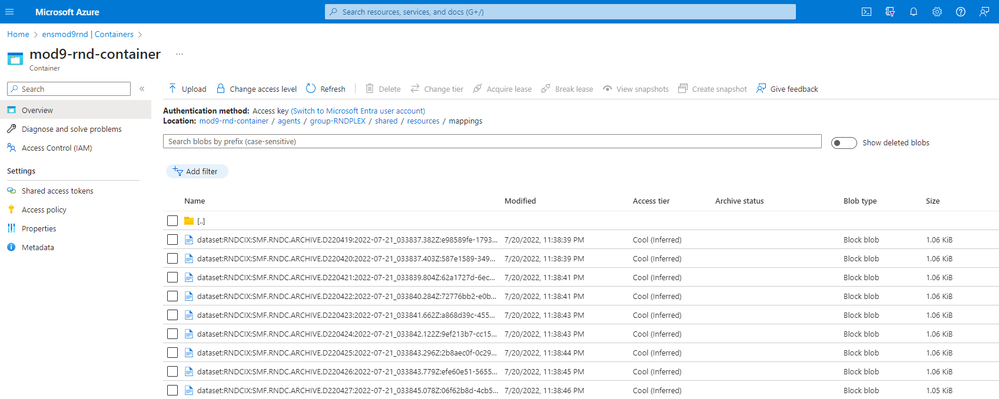

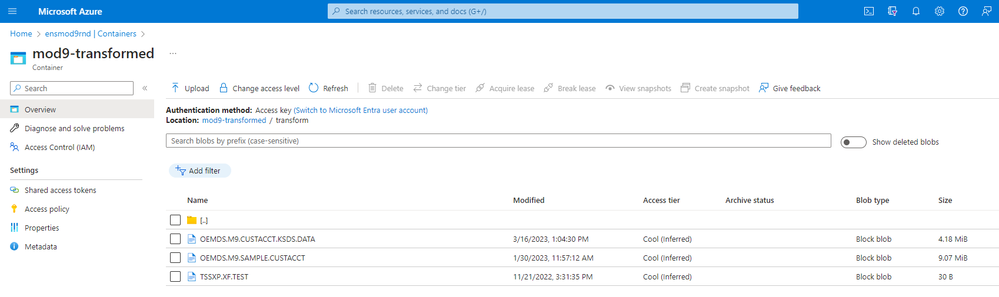

Once the data is sent to Azure Blob Storage, it can be left there to meet long-term retention requirements or converted for further use by Azure services (including AI/ML use cases), depending on the solution chosen to back up data to Azure. For use in Azure, the data needs to be converted from EBCDIC to ASCII format.

Figure 6 Data in EBCDIC format in Azure Blob Storage

Figure 7 Data Converted to ASCII in Azure Blob Storage
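The character conversion itself can be sketched in a few lines of Python. This is a minimal illustration, not the conversion the solution ships with: it assumes fixed-length, text-only records and the `cp037` EBCDIC code page (both assumptions, since the post does not state the record layout or code page), and the function name is hypothetical. Packed-decimal or binary fields cannot be handled by a plain character decode and would need field-level handling.

```python
from typing import List


def ebcdic_records_to_text(data: bytes, record_length: int,
                           codepage: str = "cp037") -> List[str]:
    """Split fixed-length EBCDIC records and decode each one to text."""
    if record_length <= 0:
        raise ValueError("record_length must be positive")
    records = []
    for start in range(0, len(data), record_length):
        record = data[start:start + record_length]
        # Decode EBCDIC bytes to str and drop the blank padding on the right.
        records.append(record.decode(codepage).rstrip())
    return records


# Two hypothetical 10-byte records, built here by encoding text to EBCDIC.
sample = "ACCT0001  ".encode("cp037") + "ACCT0002  ".encode("cp037")
lines = ebcdic_records_to_text(sample, record_length=10)
ascii_payload = "\n".join(lines).encode("ascii")
print(ascii_payload)  # b'ACCT0001\nACCT0002'
```

Mainframe data sets often carry no newline delimiters, which is why the sketch splits on a record length and adds line breaks only on output.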

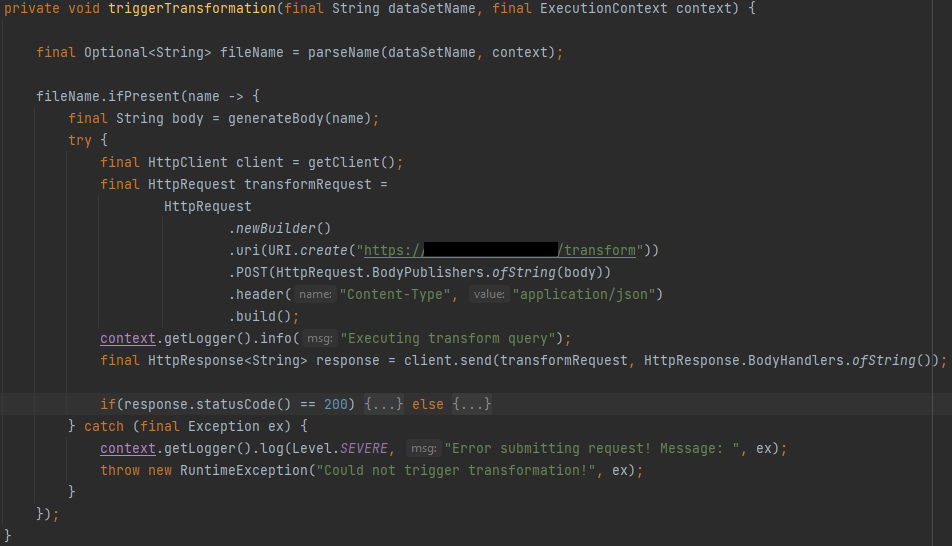

Converting the Data using an Azure Function

An Azure Function triggers the conversion of the data, and the converted data is then sent for further use by Ensono Stacks (AI analytics) or Power BI (example below):

Figure 8 Azure Function Triggering Conversion of Data

Figure 9 Data sent for further AI/BI use

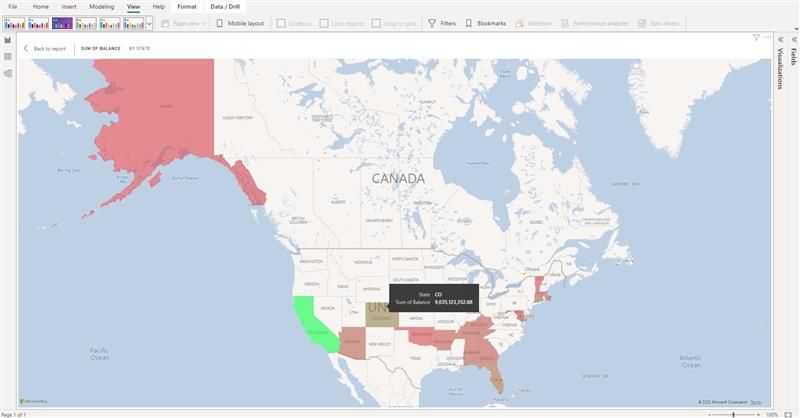

Figure 10 Power BI View of Data - 1

Figure 11 Power BI View of Data - 2

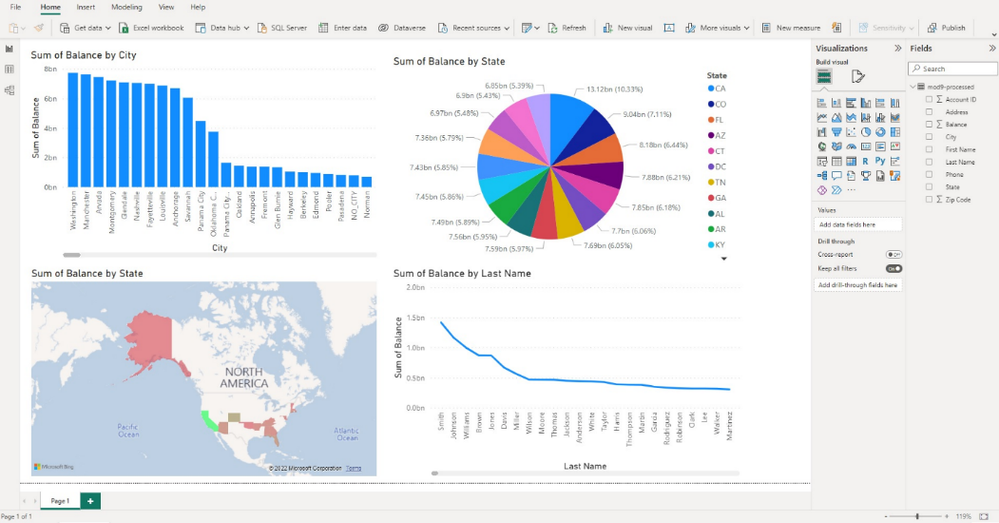
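The step that the Azure Function triggers could look roughly like the handler below. It is a sketch under stated assumptions: the function name, the `ascii/` output folder, the `.txt` suffix, and the `cp037` code page are all hypothetical, and the blob trigger and output bindings that would call this handler in a real Function App are left out so the logic stays runnable on its own.

```python
from pathlib import PurePosixPath
from typing import Tuple


def convert_blob(name: str, payload: bytes,
                 codepage: str = "cp037") -> Tuple[str, bytes]:
    """Return (output blob name, ASCII payload) for an incoming EBCDIC blob."""
    source = PurePosixPath(name)
    # Hypothetical naming rule: write the result under an "ascii/" folder.
    target = PurePosixPath("ascii") / (source.stem + ".txt")
    text = payload.decode(codepage)
    # Characters with no ASCII equivalent are replaced with "?".
    return str(target), text.encode("ascii", errors="replace")


out_name, out_bytes = convert_blob("ebcdic/backup01.dat", "HELLO".encode("cp037"))
print(out_name, out_bytes)  # ascii/backup01.txt b'HELLO'
```

Keeping the conversion in a plain function like this makes it easy to test locally before wiring it to a blob trigger, and the downstream consumers only ever see the converted output path.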

Leveraging Data and AI Services with Ensono Stacks

The Ensono Stacks Data Platform delivers a modern Lakehouse solution, based upon the medallion architecture, with Bronze, Silver and Gold layers for various stages of data preparation. The platform utilizes tools including Azure Data Factory for data ingestion and orchestration, Databricks for data processing and Azure Data Lake Storage Gen2 for data lake storage. It provides a foundation for data analytics and reporting through Microsoft Fabric and Power BI.

Key elements of the solution include:

- Infrastructure as code (IaC) for all infrastructure components (Terraform).

- Deployment pipelines to enable CI/CD and DataOps for the platform and all data workloads.

- Data ingest pipelines that transfer data from a source into the landing (Bronze) data lake zone.

- Data processing pipelines performing data transformations from Bronze to Silver and Silver to Gold layers.

The solution utilizes the Stacks Data Python library, which offers a suite of utilities to support:

- Data transformations using PySpark.

- Frameworks for data quality validations and automated testing.

- The Datastacks CLI - a tool enabling developers to quickly generate new data workloads.
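The Bronze, Silver and Gold layering can be illustrated with a small plain-Python sketch. It does not use PySpark, Databricks, or the Stacks Data library; the record format, field names, and function names are hypothetical and stand in only for the idea that each layer refines the one before it.

```python
from collections import defaultdict


def to_silver(bronze_rows):
    """Parse raw rows into typed records; drop rows that fail validation."""
    silver = []
    for row in bronze_rows:
        parts = [p.strip() for p in row.split(",")]
        if len(parts) != 2:
            continue  # data quality check: wrong number of fields
        account, amount = parts
        try:
            silver.append({"account": account, "amount": float(amount)})
        except ValueError:
            continue  # data quality check: amount is not numeric
    return silver


def to_gold(silver_rows):
    """Aggregate validated records into a reporting-ready summary."""
    totals = defaultdict(float)
    for record in silver_rows:
        totals[record["account"]] += record["amount"]
    return dict(totals)


# Bronze: raw rows exactly as they landed, including one malformed row.
bronze = ["A1, 10.5", "A2, 4", "A1, 2.5", "bad row"]
gold = to_gold(to_silver(bronze))
print(gold)  # {'A1': 13.0, 'A2': 4.0}
```

The malformed row stays in Bronze untouched but never reaches Silver, which is the main reason for keeping the raw landing zone separate from the cleaned layers.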

Ensono Stacks Architecture

Figure 12 Diagram showing high-level architecture for the standard Ensono Stacks Data Lake

The deployed platform can integrate with Microsoft Fabric to provide a suite of analytics tools and capabilities. The solution is designed to be deployed within a secure private network - for details see infrastructure and networking.

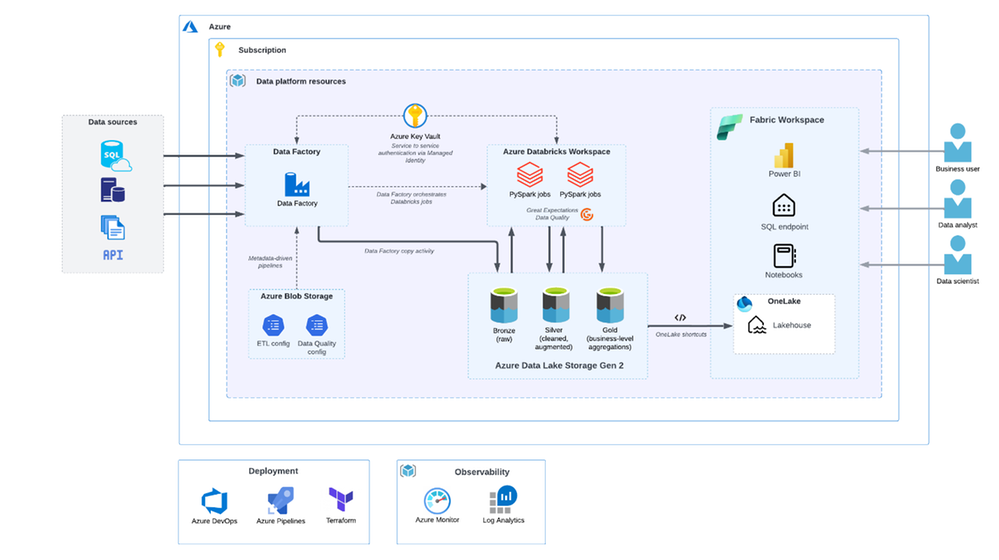

The following shows the converted data from our Virtual Tape Solution being ingested into a data pipeline for use:

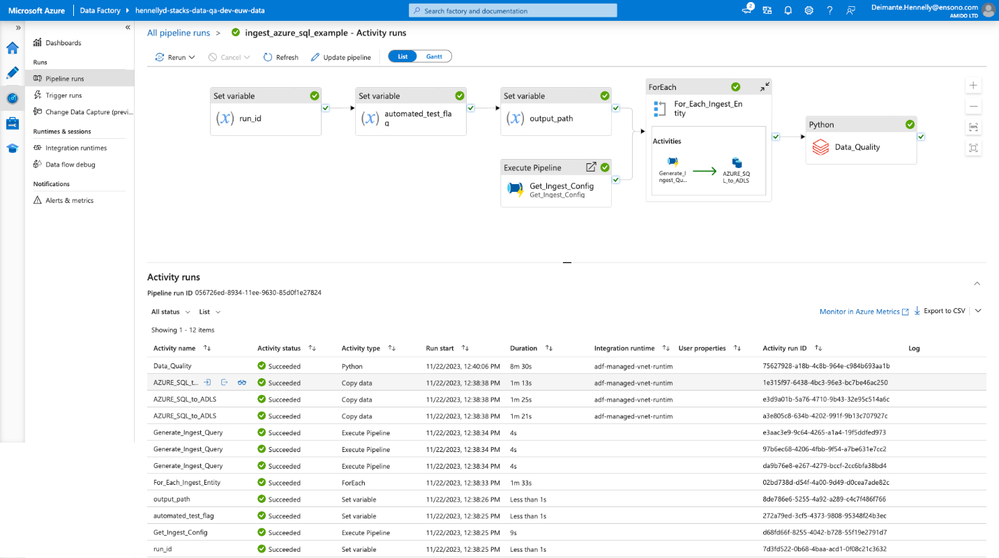

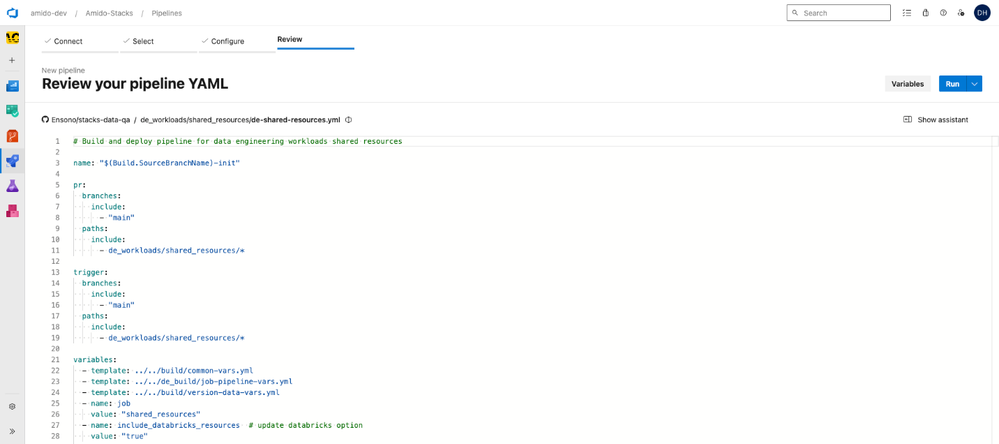

Figure 13 Diagram showing deployment pipeline from Azure DevOps

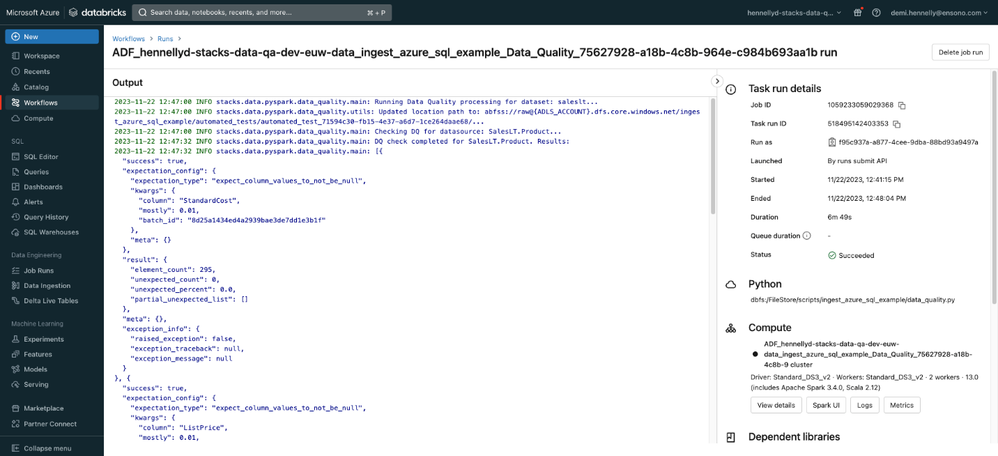

Figure 14 Diagram showing Azure Data Factory (ADF) performing ingestion and data quality checks

Figure 15 Diagram showing Azure Data Factory (ADF) output

Figure 16 Diagram showing Azure DevOps pipeline deploying Databricks resources

Ensono Cloud-Connected Mainframe for Microsoft Azure Reference Architecture Diagram

Figure 17 Reference Architecture

Regional Availability:

There are no location-specific requirements for the solution. Latency-sensitive customer scenarios require ExpressRoute Local connectivity to achieve the low latencies described above. ExpressRoute Local connectivity is available in the regions specified here: Locations and connectivity providers for Azure ExpressRoute | Microsoft Learn

See More:

More information on the Ensono solution can be found on Azure Marketplace here: Ensono Cloud-Connected Mainframe for Microsoft Azure – Microsoft Azure Marketplace

Authors:

Venkat Ramakrishnan - Senior Technical Program Manager, Microsoft

Shelby Howard – Ensono Senior Sales Executive

Rob Caldwell – Ensono Director Product Management, Cloud Solutions

Gordon McKenna – VP Ensono Digital, Cloud Evangelist & Alliances
