Enrich your Data Estate with Fabric Pipelines and Azure OpenAI

The benefits of Generative AI are of huge interest to many organisations, and the possibilities seem endless. One interesting use case is using Azure OpenAI models in data pipelines to create new data assets or enrich existing ones.

Integrating Azure OpenAI into Fabric data processing pipelines enables numerous scenarios, either creating new datasets or augmenting existing ones to support downstream analytics. As a simple example, a generative AI natural language model could be used to gather additional information about zip codes, such as demographics (population, occupations, etc.), which could in turn be ingested and conditioned to enrich the data.

The following example demonstrates how Fabric pipelines can be integrated with Azure OpenAI using the pipeline Web activity, whilst also leveraging Azure API Management to provide an additional management and security layer. I am a big fan of placing API Management in front of any internal or external API services because of capabilities such as authentication, throttling, header manipulation and versioning. Further guidance on Azure OpenAI and API Management is available in Build an enterprise-ready Azure OpenAI solution with Azure API Management (Microsoft Community Hub).

The Fabric pipeline and Azure OpenAI flow is as follows:

- Extract a data element from the Fabric data warehouse (in this case, a zip code)

- Pass the value to an Azure OpenAI natural language model (GPT-3.5 Turbo) via Azure API Management

- The GPT-3.5 Turbo model, which understands and generates natural language and code, returns information based on the zip code to the Fabric pipeline; in this example, population information is returned, and the data can then be further processed and persisted to storage.
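To make the data flow in these three steps concrete, here is a minimal Python sketch of the same loop outside Fabric. It rests on some assumptions: `build_question`, `enrich_zip_codes` and `fake_model` are hypothetical names, the prompt wording is illustrative rather than the one used in the pipeline, and the model call is injected as a plain function so the example runs without network access.

```python
from typing import Callable, Dict, List


def build_question(zip_code: str) -> str:
    # Hypothetical prompt wording; the pipeline's actual prompt is not shown here.
    return f"What is the population of US zip code {zip_code}?"


def enrich_zip_codes(
    zip_codes: List[str],
    ask_model: Callable[[str], str],
) -> List[Dict[str, str]]:
    """Step 1 supplies zip_codes, step 2 is ask_model, step 3 returns rows to persist."""
    rows = []
    for zip_code in zip_codes:
        answer = ask_model(build_question(zip_code))
        # Keep the source key next to the model output so results can be joined back.
        rows.append({"zip_code": zip_code, "model_answer": answer})
    return rows


def fake_model(question: str) -> str:
    # Stand-in for the Azure OpenAI call via API Management (no network needed).
    return f"answer to: {question}"


if __name__ == "__main__":
    for row in enrich_zip_codes(["98052", "10001"], fake_model):
        print(row)
```

Injecting the model call keeps the enrichment logic easy to test; in the pipeline itself, that role is played by the Web activity.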

Fabric pipelines provide an excellent range of integration options. The Web activity (Web activity - Microsoft Fabric | Microsoft Learn), coupled with dynamic processing in Fabric, is extremely powerful and enables a range of API calls (GET, POST, PUT, DELETE and PATCH) to web services. Please note that the same functionality can be achieved in Azure Data Factory pipelines.

The diagram below illustrates the simple Fabric pipeline flow and activities.

Figure 1.0 Microsoft Fabric Pipeline integrating Azure OpenAI

The initial Script activity extracts a source data attribute, in this case a zip code, from the Fabric OneLake data warehouse. The output is stored in the variable varQuestionParameter. In this example, the intermediate variable is used for debugging purposes and can be removed later if needed.

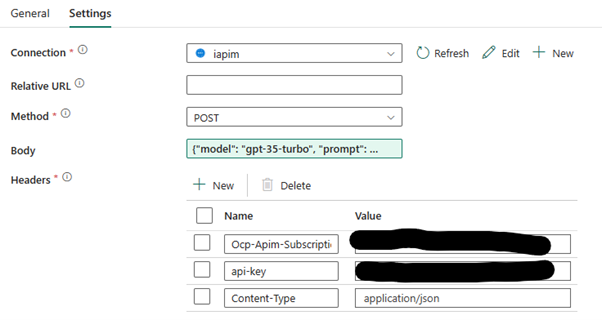
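To see how the zip code travels from the Script activity into the rest of the pipeline, it helps to look at the shape of the activity's output. The sketch below assumes the Script activity output layout used by Data Factory (a `resultSets` array whose entries hold `rows`), a hypothetical activity name `Script1` and a hypothetical column name `ZipCode`. Under those assumptions, a pipeline expression such as `@activity('Script1').output.resultSets[0].rows[0].ZipCode` would read the value; the Python function simply mirrors that lookup.

```python
import json

# Hypothetical sample of a Script activity output; the field names are assumed
# to follow the Data Factory Script activity output, and the values are made up.
SAMPLE_OUTPUT = json.loads("""
{
  "resultSetCount": 1,
  "recordsAffected": 0,
  "resultSets": [
    {"rowCount": 1, "rows": [{"ZipCode": "98052"}]}
  ]
}
""")


def first_value(script_output: dict, column: str) -> str:
    """Mirror of the pipeline expression resultSets[0].rows[0].<column>."""
    result_sets = script_output.get("resultSets", [])
    if not result_sets or not result_sets[0].get("rows"):
        raise ValueError("Script activity returned no rows")
    return str(result_sets[0]["rows"][0][column])


# Python stand-in for the pipeline variable varQuestionParameter.
var_question_parameter = first_value(SAMPLE_OUTPUT, "ZipCode")
print(var_question_parameter)  # 98052
```

Guarding against an empty result set is worth doing in the pipeline too, since an expression that indexes into `rows[0]` will fail if the query returns nothing.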

The pipeline Web activity is easily configured to send a POST request to the Azure OpenAI natural language model via API Management, with headers for the APIM subscription key, the API key and the Content-Type, as shown below.

Figure 2.0 Microsoft Fabric Pipeline Web Activity configuration

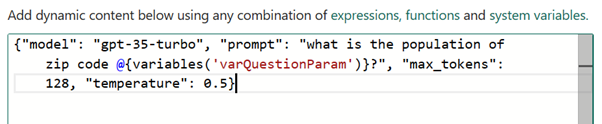
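A rough Python equivalent of this Web activity configuration is sketched below; it builds, but does not send, the POST request. The gateway host, API path, deployment name and `api-version` value are hypothetical placeholders. The header names are also assumptions: `Ocp-Apim-Subscription-Key` is API Management's default subscription key header and `api-key` is the header Azure OpenAI accepts for key authentication, but your APIM policies may expect different ones.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with your own APIM gateway, API path and deployment.
APIM_BASE_URL = "https://contoso-apim.azure-api.net/openai"
DEPLOYMENT = "gpt-35-turbo"
API_VERSION = "2024-02-01"


def build_chat_request(body: dict, subscription_key: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) the POST request the Web activity would issue."""
    url = (
        f"{APIM_BASE_URL}/deployments/{DEPLOYMENT}/chat/completions"
        f"?api-version={API_VERSION}"
    )
    headers = {
        "Ocp-Apim-Subscription-Key": subscription_key,  # APIM default subscription header
        "api-key": api_key,  # Azure OpenAI key header, unless APIM adds credentials itself
        "Content-Type": "application/json",
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


req = build_chat_request({"messages": []}, "<apim-key>", "<aoai-key>")
print(req.get_method(), req.full_url)
# Sending would be urllib.request.urlopen(req); omitted so the sketch runs offline.
```

Note that `urllib.request.Request` normalises header names (for example, `Content-Type` is stored as `Content-type`), which matters only if you read headers back from the request object.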

The body of the API POST is dynamically constructed using parameters as shown below.

Figure 3.0 Microsoft Fabric Pipeline Web Activity dynamic content

Dynamic expressions in Fabric pipelines are incredibly powerful and allow run-time configuration of activities, connections and datasets.
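For readers who want to see the request body outside the pipeline designer, the sketch below builds it in the Azure OpenAI chat completions format (a `messages` array plus settings such as `max_tokens` and `temperature`). The system prompt wording and default values are hypothetical. In the pipeline, the zip code would typically come from `varQuestionParameter`, for example through string interpolation such as `@{variables('varQuestionParameter')}`. One advantage of using a JSON serializer, as here, is that quotes or other special characters in the source value are escaped correctly, which is worth keeping in mind when the body is assembled from parameters.

```python
import json


def build_chat_body(zip_code: str, max_tokens: int = 200, temperature: float = 0.2) -> str:
    """Return the JSON body for a chat completions call about one zip code."""
    body = {
        "messages": [
            # Hypothetical instructions; adjust to the enrichment you need.
            {"role": "system", "content": "You return concise demographic facts."},
            {"role": "user", "content": f"What is the population of zip code {zip_code}?"},
        ],
        "max_tokens": max_tokens,  # upper bound on generated tokens
        "temperature": temperature,  # lower values give more deterministic output
    }
    return json.dumps(body)


print(build_chat_body("98052"))
```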

In the example shown above, max_tokens is a configurable parameter that specifies the maximum number of tokens (the chunks, often word fragments, that text is split into) that can be generated in the chat completion. Occasionally it is necessary to increase this value: for example, if responses are being cut off, consider setting max_tokens higher so that the model does not stop generating text before it reaches the end of the message.
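One way to tell when a higher max_tokens value is needed is to inspect the response: in the chat completions response format, `choices[0].finish_reason` is `"length"` when generation stopped because the token limit was reached, and `"stop"` when the model finished naturally. The helpers below are a hypothetical sketch of a retry policy built on that field; the doubling strategy and the cap of 1,000 tokens are arbitrary illustrative choices, and the sample responses are made up.

```python
def was_truncated(response: dict) -> bool:
    """True if the model stopped because it hit max_tokens."""
    return response["choices"][0].get("finish_reason") == "length"


def next_max_tokens(current: int, cap: int = 1000) -> int:
    """Double the token budget for a retry, never exceeding the cap."""
    return min(current * 2, cap)


# Made-up sample responses showing only the fields used above.
truncated = {"choices": [{"finish_reason": "length", "message": {"content": "Example ans"}}]}
complete = {"choices": [{"finish_reason": "stop", "message": {"content": "Example answer."}}]}

print(was_truncated(truncated), was_truncated(complete))  # True False
print(next_max_tokens(200), next_max_tokens(800))  # 400 1000
```

In a pipeline, a comparable check could sit in an If Condition activity using an expression such as `@equals(activity('Web1').output.choices[0].finish_reason, 'length')`, where `Web1` is a hypothetical activity name.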

Sampling temperature, by contrast, controls how creative the model's output is. A higher temperature (e.g., 0.7) results in more diverse and creative output, while a lower temperature (e.g., 0.2) makes the output more deterministic and focused. Examples of values and definitions can be found in Cheat Sheet: Mastering Temperature and Top_p in ChatGPT API (OpenAI Developer Forum).
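Conceptually, sampling temperature rescales the model's next-token scores (logits) before they are turned into probabilities: each logit is divided by the temperature before the softmax, so a low temperature concentrates probability on the most likely token and a high temperature spreads it out. The sketch below illustrates that general technique with made-up logits; it is not a description of Azure OpenAI's internal implementation.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.2, 0.7):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top token taking almost all of the probability at 0.2 and noticeably less at 0.7, which is why lower temperatures give more repeatable answers.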

The model's response is returned to the Fabric Web activity, and its output can then be persisted to Fabric OneLake or another storage destination. This is just a simple example demonstrating how easily Generative AI scenarios can be introduced into data integration pipelines.
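Before persisting, the generated text has to be pulled out of the response. In the chat completions format it sits at `choices[0].message.content`, so in the pipeline an expression along the lines of `@activity('Web1').output.choices[0].message.content` would reference it (`Web1` is a hypothetical activity name). The Python sketch below does the same and writes a row to CSV in memory as a stand-in for a write to OneLake; the column names and the sample response are made up.

```python
import csv
import io


def extract_answer(response: dict) -> str:
    """Return the assistant's text from a chat completions response."""
    return response["choices"][0]["message"]["content"].strip()


def to_csv(rows: list) -> str:
    """Serialize enrichment rows to CSV text (a stand-in for a OneLake write)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["zip_code", "population_info"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up response containing only the fields used above.
sample_response = {"choices": [{"message": {"role": "assistant", "content": " Example answer. "}}]}
row = {"zip_code": "98052", "population_info": extract_answer(sample_response)}
print(to_csv([row]))
```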

Please post if you have questions/comments, or if you are exploring data pipeline and generative AI integration scenarios to enable new insights.

References

- Fabric Pipelines: Ingest data into your Warehouse using data pipelines - Microsoft Fabric | Microsoft Learn

- Fabric Pipelines vs. Azure Data Factory: Differences between Data Factory in Fabric and Azure - Microsoft Fabric | Microsoft Learn

- Azure OpenAI Service Models: Azure OpenAI Service models - Azure OpenAI | Microsoft Learn

- Azure OpenAI and API Management: Build an enterprise-ready Azure OpenAI solution with Azure API Management - Microsoft Community Hub

- Azure Architecture Center: Azure Architecture Center - Azure Architecture Center | Microsoft Learn
